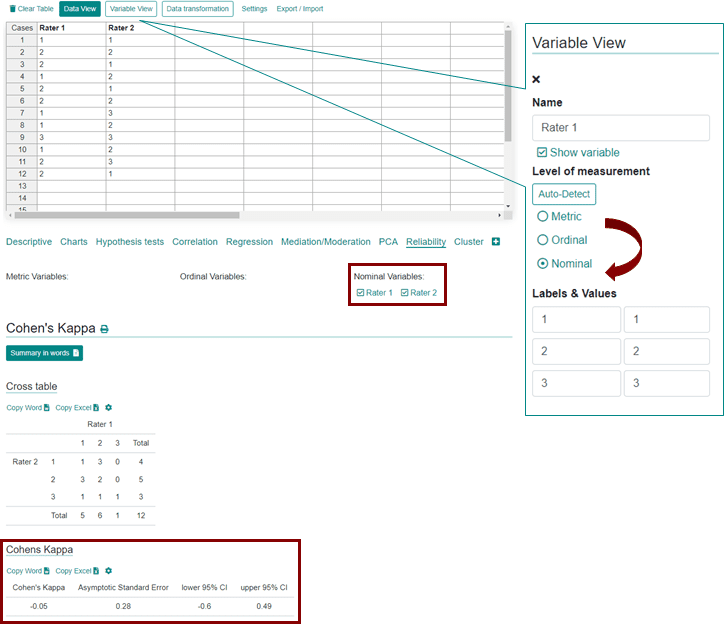

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

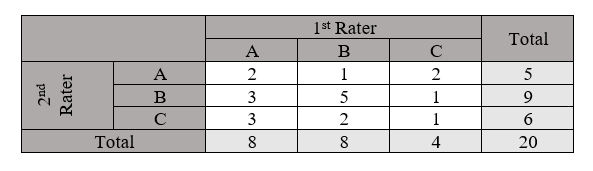

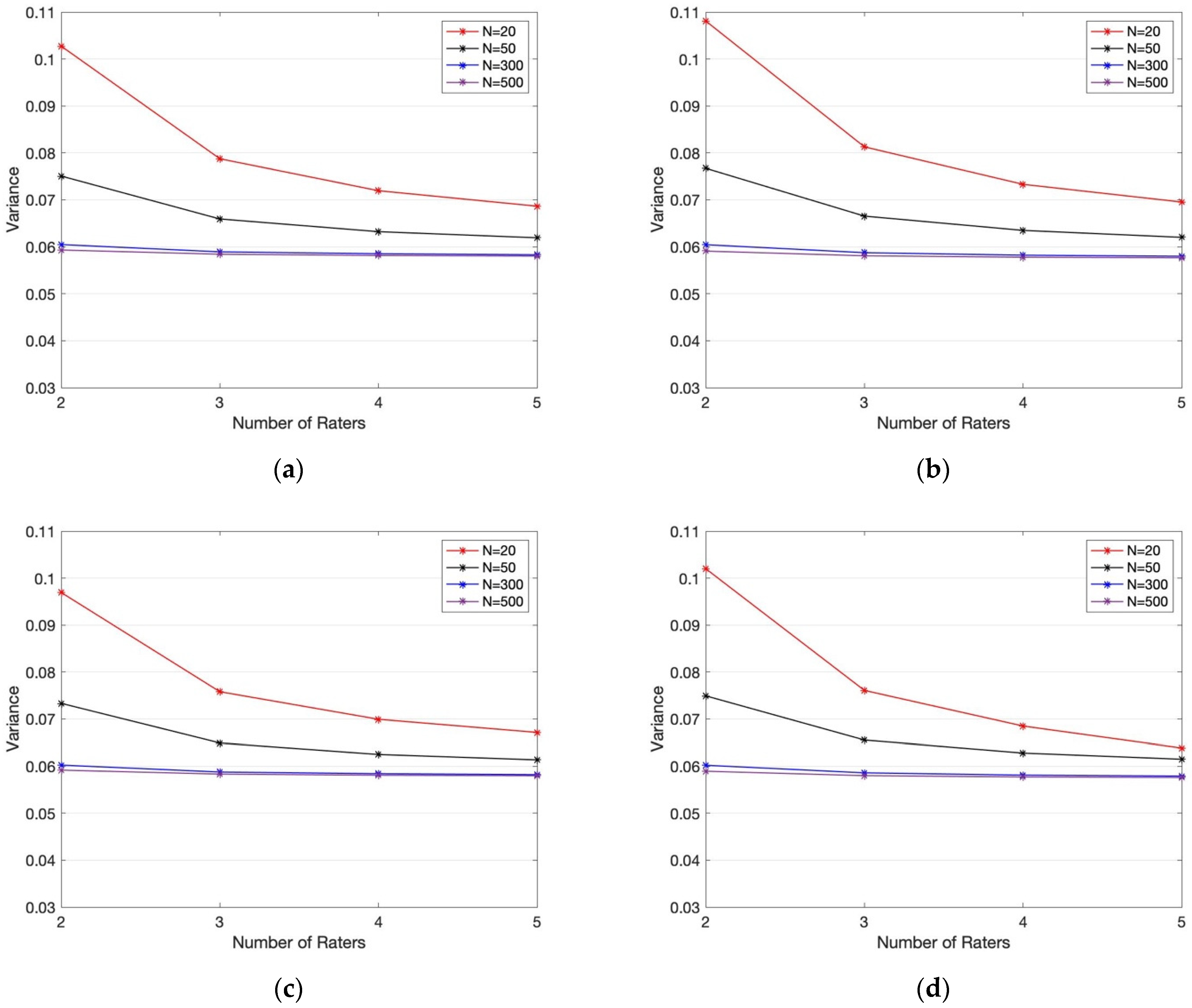

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter- Rater Agreement of Binary Outcomes and Multiple Raters | HTML

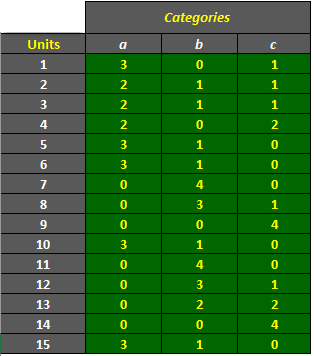

AgreeStat/360: computing weighted agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) with ratings in the form of a distribution of raters by subject and category

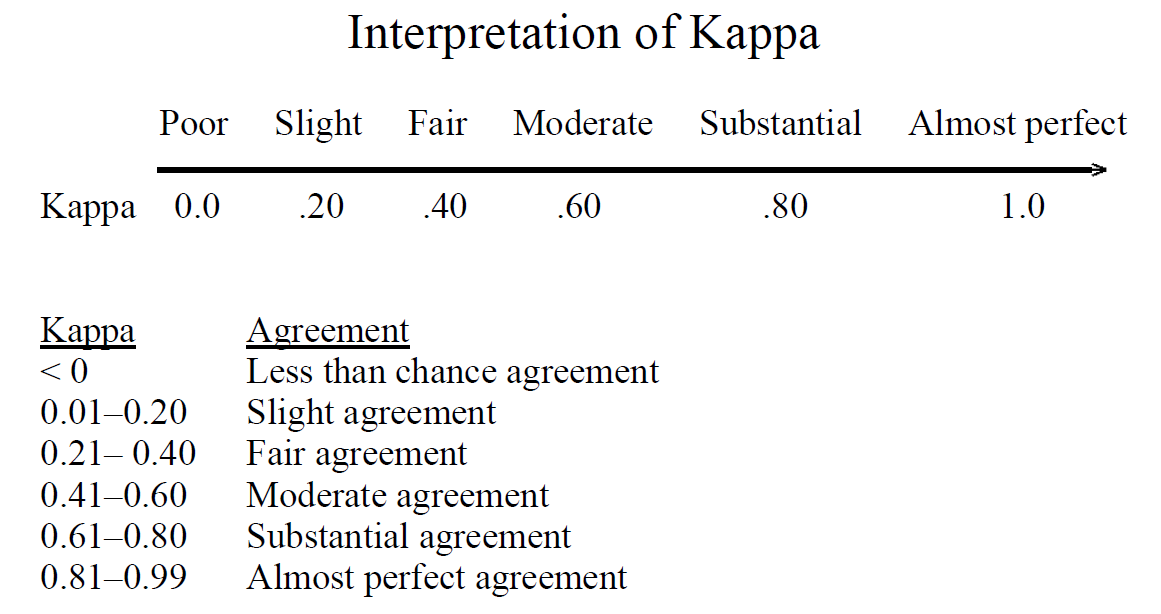

![Fleiss' Kappa and Inter rater agreement interpretation [24] | Download Table Fleiss' Kappa and Inter rater agreement interpretation [24] | Download Table](https://www.researchgate.net/publication/281652142/figure/tbl3/AS:613853020819479@1523365373663/Fleiss-Kappa-and-Inter-rater-agreement-interpretation-24.png)

![Fleiss Kappa [Simply Explained] - YouTube Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/maxresdefault.jpg)